Proving the value of AI in customer support is still a challenge for many companies. At the beginning, the discussion almost always focuses on operational efficiency, cost reduction, and volume deflection.

The problem is that, at scale, AI changes more than cost. It changes the support growth model, the role of the human team, and the metrics used to assess performance and impact.

When AI moves beyond the pilot phase and becomes established as infrastructure, customer support stops operating like a production line and begins to function as part of the product and the strategy.

This text is about that transition, and about what it requires from operations, metrics, and the team.

Why can’t the value of AI be reduced to just “cutting costs”?

To sustain investment and scale AI, the case needs to go beyond immediate savings. At scale, AI changes the economics of customer service. It decouples support costs from growth and turns support into a lever for activation, retention, and customer value, not just a cost center.

In Brazil, customer service almost always grows the same way: more people, more BPO, and more fixed cost. That is why companies commonly expect AI to cut spending quickly. The problem is that this reasoning treats AI as point-in-time efficiency software when, in practice, it changes the way the operation absorbs work.

When applied well, AI takes on part of the recurring volume and frees the human team to work where it has the greatest impact. Support stops growing in a straight line with the customer base and begins to sustain growth with greater predictability. The value shows up less in immediate cuts and more in the capability built over time.

What does it mean to “decouple support costs from growth” in practice?

It is when revenue keeps growing but the cost of service flattens, grows slowly, or even falls. This does not happen on day one. At the beginning, it looks like an additional expense, because you are financing a new AI layer while the old structure still exists. The gain comes over time, as attrition, fewer backfilled positions, and automation build up.

In practice, this decoupling happens through natural attrition, not through rupture. Open positions stop being refilled, BPO expansions are slowed down, and the internal team begins to absorb more volume without losing control. Each automated flow reduces a little of the future pressure on the operation.

Over time, these gains compound. Service starts to grow more slowly than the company as a whole, creating stability where there used to be only reaction. In a market like ours, where both hiring and downsizing are expensive and risky, this effect matters even more. It is not about replacing people, but about not repeating the same structural growth at each new stage.
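One way to watch for this decoupling is to track support cost as a share of revenue across successive periods. The sketch below is only an illustration: the quarterly figures are invented, and `cost_to_revenue` and `growth` are hypothetical helpers, not a standard metric definition.

```python
def cost_to_revenue(costs, revenues):
    """Support cost as a share of revenue, period by period."""
    return [cost / revenue for cost, revenue in zip(costs, revenues)]


def growth(series):
    """Period-over-period growth rates for a series of positive values."""
    return [(curr - prev) / prev for prev, curr in zip(series, series[1:])]


# Invented quarterly figures (same currency unit), for illustration only.
revenue = [100.0, 110.0, 121.0, 133.0, 146.0]
# Cost rises at first (AI layer paid on top of the old structure), then flattens.
support_cost = [20.0, 23.0, 23.5, 24.0, 24.0]

ratios = cost_to_revenue(support_cost, revenue)
revenue_growth = growth(revenue)
cost_growth = growth(support_cost)

for quarter, ratio in enumerate(ratios, start=1):
    print(f"Q{quarter}: support cost = {ratio:.1%} of revenue")

# Decoupling shows up in periods where cost grows more slowly than revenue.
decoupled = [c < r for c, r in zip(cost_growth, revenue_growth)]
print(decoupled)
```

In this made-up series the ratio gets worse in the second quarter before it improves, which matches the point that the gain does not appear on day one.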

Which legacy metrics get distorted in an AI-first model?

Metrics such as average handling time (AHT), volume of resolved cases, and first contact resolution (FCR) stop reflecting value when AI takes on the straightforward volume. When humans are left with complex, sensitive, and emotional cases, AHT tends to rise and FCR to fall because the cases are harder, not because the team is performing worse.

In a traditional model, reducing AHT and increasing FCR are usually clear signs of efficiency, but in an AI-first model, that reasoning no longer works. For that reason, measuring productivity only by speed or handled volume starts to distort reality and requires new comparison benchmarks.
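A small worked example shows why the average moves even when nobody's performance changes. The volumes and handling times are invented for illustration; the only point is the mix effect on the human queue.

```python
def blended_aht(segments):
    """Volume-weighted average handling time (minutes) over (volume, aht) pairs."""
    total_volume = sum(volume for volume, _ in segments)
    return sum(volume * aht for volume, aht in segments) / total_volume


# Invented numbers: per-case handling time is identical before and after.
SIMPLE_AHT = 4.0    # minutes per simple case
COMPLEX_AHT = 15.0  # minutes per complex case

# Before AI: humans handle 800 simple and 200 complex cases.
before = blended_aht([(800, SIMPLE_AHT), (200, COMPLEX_AHT)])

# After AI: 700 simple cases never reach a human; the complex ones still do.
after = blended_aht([(100, SIMPLE_AHT), (200, COMPLEX_AHT)])

print(f"human AHT before AI: {before:.1f} min")
print(f"human AHT after AI:  {after:.1f} min")
```

The human team's AHT rises from about 6 to about 11 minutes in this sketch, even though each type of case takes exactly as long as before. Comparing AHT within a case category, rather than across the whole queue, is one way to build the new benchmark.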

How do you measure the human team's performance when AI becomes the front line?

When AI handles the first contact, the human team's role becomes resolving exceptions and improving the system. Metrics need to reflect this shift by showing where human effort is going, whether handoffs (transfers from AI to a human) are resolved without recontact, how much of the human work turns into improvements to the service, and which skills the team is developing.

With AI filtering and resolving a significant portion of the volume, the focus shifts to resolving cases that require judgment, empathy, and decision-making, as well as fixing automation failures when they appear. And that changes what should be measured.
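As a rough sketch of two of these measurements, the code below computes the share of handoffs resolved without recontact and counts handoffs by reason to show where human effort goes. The `Handoff` record, its fields, and the sample reasons are assumptions made for illustration, not a schema from any particular tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Handoff:
    """One AI-to-human transfer (hypothetical record shape)."""
    reason: str        # why the AI handed off, e.g. "billing dispute"
    resolved: bool     # the human closed the case
    recontacted: bool  # the customer came back about the same issue


def clean_handoff_rate(handoffs):
    """Share of handoffs resolved by a human with no recontact."""
    if not handoffs:
        return 0.0
    clean = sum(1 for h in handoffs if h.resolved and not h.recontacted)
    return clean / len(handoffs)


def effort_by_reason(handoffs):
    """Count handoffs per reason to show where human effort is going."""
    return Counter(h.reason for h in handoffs)


# Invented sample data.
sample = [
    Handoff("billing dispute", resolved=True, recontacted=False),
    Handoff("billing dispute", resolved=True, recontacted=True),
    Handoff("account access", resolved=True, recontacted=False),
    Handoff("cancellation", resolved=False, recontacted=False),
]

print(f"clean handoff rate: {clean_handoff_rate(sample):.0%}")
print(effort_by_reason(sample).most_common())
```

Reasons that show up often are candidates for the improvement work described above, whether that means fixing an automation failure or adding missing knowledge.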

How do you measure AI agent performance (without confusing deflection with resolution)?

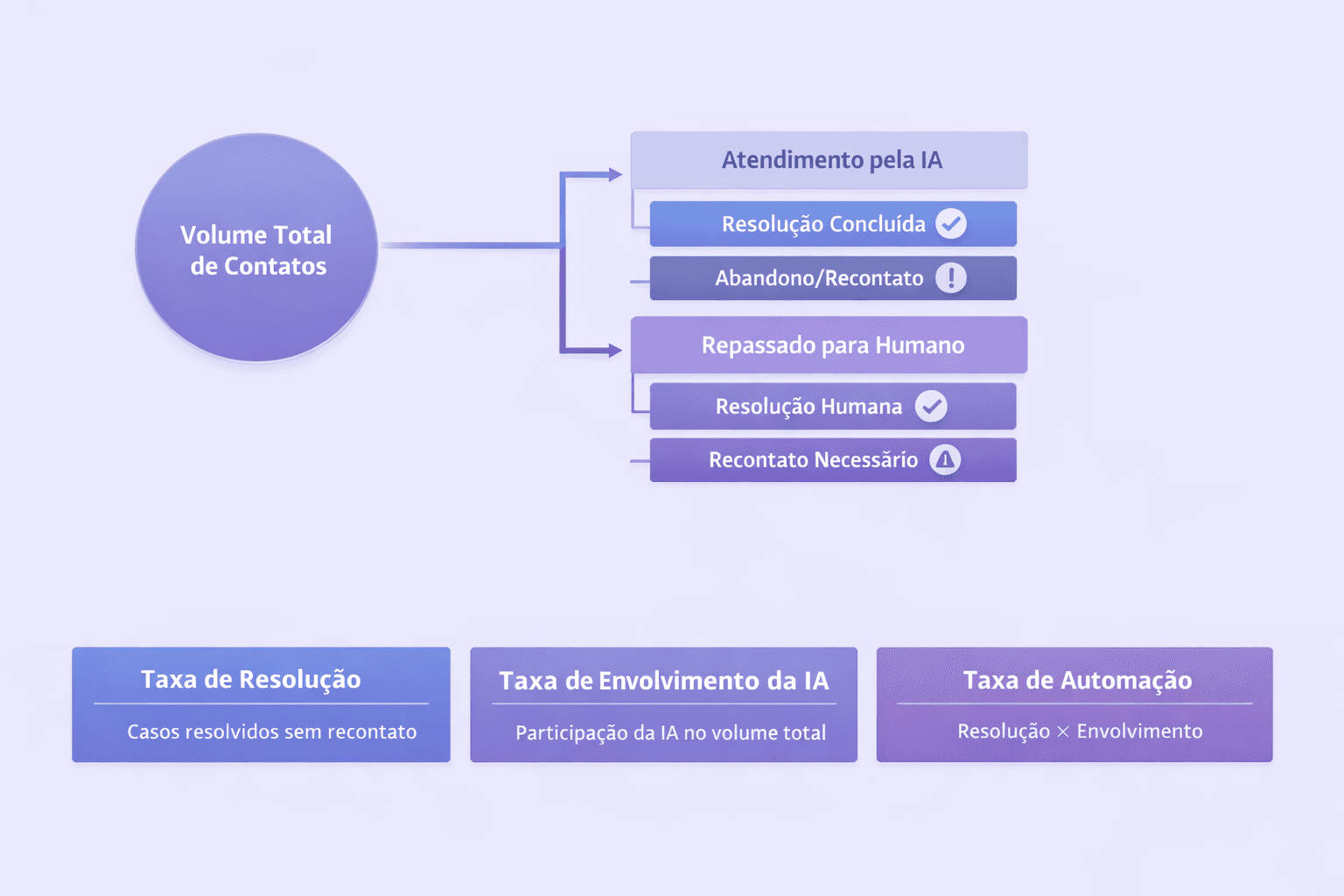

In AI, “deflection” (when the contact doesn’t reach a human) is an initial signal, but it can be misleading. Here, what really matters is resolution. To measure the agent at scale, the core model combines three metrics: resolution rate, AI engagement rate, and automation rate (resolution × engagement).

Deflection alone can mask problems. A customer may leave the automated flow without help and come back later, more dissatisfied. For this reason, the core metric becomes the resolution rate, which shows how many interactions were completed by AI without human intervention and without recontact.

This metric makes even more sense when combined with how much AI participates in the total volume of interactions. Combining these two factors indicates not only whether AI resolves issues, but where it operates and how much work it actually absorbs. Together, these metrics avoid superficial readings and help understand the real impact of automation in customer support.
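The three metrics can be sketched in a few lines. The relation automation rate = resolution × engagement comes from the model above; the exact denominators are an assumption here (engagement over all conversations, resolution over the conversations the AI engaged in), and the `AgentVolume` fields and the counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AgentVolume:
    """Hypothetical conversation counts for one period."""
    total_conversations: int  # every conversation the operation received
    ai_engaged: int           # conversations in which the AI agent took part
    ai_resolved: int          # engaged conversations closed with no human and no recontact


def engagement_rate(v: AgentVolume) -> float:
    """Share of all conversations in which the AI took part."""
    return v.ai_engaged / v.total_conversations if v.total_conversations else 0.0


def resolution_rate(v: AgentVolume) -> float:
    """Share of AI-engaged conversations resolved without a human or recontact."""
    return v.ai_resolved / v.ai_engaged if v.ai_engaged else 0.0


def automation_rate(v: AgentVolume) -> float:
    """Resolution rate multiplied by engagement rate."""
    return resolution_rate(v) * engagement_rate(v)


# Invented counts, for illustration only.
month = AgentVolume(total_conversations=10_000, ai_engaged=8_000, ai_resolved=5_000)

print(f"engagement: {engagement_rate(month):.1%}")
print(f"resolution: {resolution_rate(month):.1%}")
print(f"automation: {automation_rate(month):.1%}")
```

With these denominators, the automation rate equals resolved conversations divided by total conversations, so it reads directly as the share of all support work the AI fully absorbed.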

How do you measure the customer experience if CSAT covers so little?

CSAT (the customer satisfaction survey) tends to cover a small fraction of conversations and to capture the extremes. Deflection also doesn't guarantee that the issue was resolved. At scale, the most reliable approach is to measure two things in every conversation: did the customer get the help they needed, and how did they feel? AI makes it possible to analyze 100% of interactions and find patterns in what's failing.

With the increase in volume and automation, survey response rates drop and bias increases. Many customers simply do not respond, while others only do so when the experience was very good or very bad. This limits the use of CSAT as a single quality indicator.

The advantage of an AI-powered model is the ability to analyze all conversations, not just a sample. Evaluating whether the customer got help and what the sentiment was throughout the interaction makes it possible to identify friction patterns in real time. These signals help correct flows, anticipate problems, and improve the experience before dissatisfaction turns into a repeat contact or churn.
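The contrast between survey coverage and full-conversation analysis can be sketched as follows. The `Conversation` fields are assumptions: `got_help` and `sentiment` stand in for whatever automated review produces those two signals, and the sample data is invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    """Hypothetical per-conversation signals."""
    got_help: bool              # assumed output of an automated review
    sentiment: float            # assumed score from -1.0 (negative) to 1.0 (positive)
    csat: Optional[int] = None  # 1-5 survey answer, None when the customer did not respond


def csat_coverage(conversations):
    """Share of conversations that have a survey answer at all."""
    if not conversations:
        return 0.0
    answered = sum(1 for c in conversations if c.csat is not None)
    return answered / len(conversations)


def helped_rate(conversations):
    """Share of all conversations in which the customer got the help they needed."""
    if not conversations:
        return 0.0
    return sum(1 for c in conversations if c.got_help) / len(conversations)


def friction_cases(conversations, sentiment_floor=0.0):
    """Conversations where the customer was not helped or ended up negative."""
    return [c for c in conversations if not c.got_help or c.sentiment < sentiment_floor]


# Invented sample: only the best and the worst experience answered the survey.
sample = [
    Conversation(got_help=True, sentiment=0.8, csat=5),
    Conversation(got_help=True, sentiment=0.2),
    Conversation(got_help=False, sentiment=-0.6, csat=1),
    Conversation(got_help=True, sentiment=-0.3),
    Conversation(got_help=False, sentiment=0.1),
]

print(f"CSAT coverage: {csat_coverage(sample):.0%}")
print(f"helped rate:   {helped_rate(sample):.0%}")
print(f"friction cases: {len(friction_cases(sample))} of {len(sample)}")
```

In this sample the survey sees two of five conversations, while the per-conversation signals flag three friction cases, two of which never produced a survey answer.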

How do you connect customer service to business results (what the CFO wants to see)?

At first, AI is justified by hours saved and hires avoided. At scale, leadership wants the bigger story: how does support influence retention, conversion, and growth? This involves modeling impact over time across cost reduction, revenue influence, churn prevention, and the feedback loop to product.

Hours saved and hires avoided help open the conversation, but they do not sustain expansion. The next step is to show how support influences broader outcomes, such as customers who activate faster after having issues resolved or who stay in the customer base longer.

It also matters to observe how support reduces avoidable churn, improves feature adoption, and generates actionable feedback for product. When these effects are tracked consistently, support is no longer seen only as an operational cost and comes to be recognized as part of the company's growth engine.

How do you capture real ROI by combining budgets (people, BPO, and software)?

AI changes the budgeting model because it takes on work that was previously split between in-house teams, outsourcing (BPO), and tools. To capture ROI, it is not enough to “count savings.” The budget has to be reallocated to fit the new operating model: slow down hiring, reduce BPO, reassign people to system roles, and move spend from services to technology when it makes sense.

In practice, the return does not appear only as a direct cost reduction. It emerges when hiring slows down, vacancies stop being refilled, and outsourced volume begins to fall. In Brazil, where BPO often represents a large share of the budget, renegotiating based on automated volume can be a real lever.

At the same time, part of the budget tends to be redirected. People begin to work on system improvement, content, quality, and AI operations, while spending gradually shifts from services to technology. ROI appears when these moves are analyzed together, not as isolated line items.
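A minimal sketch of reading the three budget lines together instead of one at a time. The `SupportBudget` structure and every figure are invented for illustration; a real model would also account for reinvestment and for revenue effects, which this one ignores.

```python
from dataclasses import dataclass


@dataclass
class SupportBudget:
    """Annual support spend by line item (hypothetical figures, same currency unit)."""
    in_house: float
    bpo: float
    software: float

    @property
    def total(self) -> float:
        return self.in_house + self.bpo + self.software


def budget_shift(before: SupportBudget, after: SupportBudget) -> dict:
    """Change per line item and in total; negative means spend went down."""
    return {
        "in_house": after.in_house - before.in_house,
        "bpo": after.bpo - before.bpo,
        "software": after.software - before.software,
        "total": after.total - before.total,
    }


# Invented before/after budgets.
before = SupportBudget(in_house=500.0, bpo=400.0, software=100.0)
after = SupportBudget(in_house=450.0, bpo=250.0, software=220.0)

shift = budget_shift(before, after)
for line, delta in shift.items():
    print(f"{line:>9}: {delta:+.0f}")
```

Read alone, the software line in this example looks like a cost increase. Read with the in-house and BPO lines, the same move is a net reduction, which is the reason for analyzing the budget as a whole.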

Where should I reinvest the gains to create a compounding effect?

The real value of AI appears when its gains are reinvested to improve journeys, strengthen knowledge, create AI operations roles, and design better experiences. Without reinvestment, automation tends to stagnate and the experience stops evolving.

Saved hours, unfilled positions, and BPO reduction function as “operational dividends”. The risk is treating these gains only as cuts and not as opportunities.

When properly directed, these resources strengthen journey design, knowledge quality, the creation of roles focused on AI operations, and the continuous improvement of service. Over time, the capacity created accumulates and begins to generate new gains on an ongoing basis, turning AI into customer service infrastructure rather than a one-off project.

What does “AI at scale” look like in 6–12 months (and what changes on the team)?

At scale, AI stops being a tool and becomes infrastructure. It absorbs a large share of the volume, including more complex flows, and changes the human role. The focus stops being closing tickets and shifts to designing the system, training, reviewing, analyzing failures, and deciding where effort is worth investing.

This changes structure, rituals, and the relationship between support, product, and business. Support stops being purely reactive and comes to be treated as a living system that evolves based on data, experience, and real impact.

At Cloud Humans, this directly aligns with the reason the company exists. The idea from the beginning was never to scale support like a production line, but to create a model in which support was part of the product and the strategy.

AI enters this design as a way to sustain growth without losing clarity, control, and predictability, not as a shortcut to cut costs. When support is born with this role, using AI at scale stops being a risky leap and becomes a natural continuation of the model.