Decision tree chatbots tend to work better for simple and predictable questions, with little variation and no need for context. AI agents make more sense in operations with high volume, multiple exceptions, and the need to resolve cases from end to end.

If you are evaluating the use of chatbots to improve your company's customer service, understanding which model is right for your scenario is essential to avoid generating the opposite effect. Instead of gaining efficiency, a wrong decision can create more friction, overload the team, and compromise the customer experience.

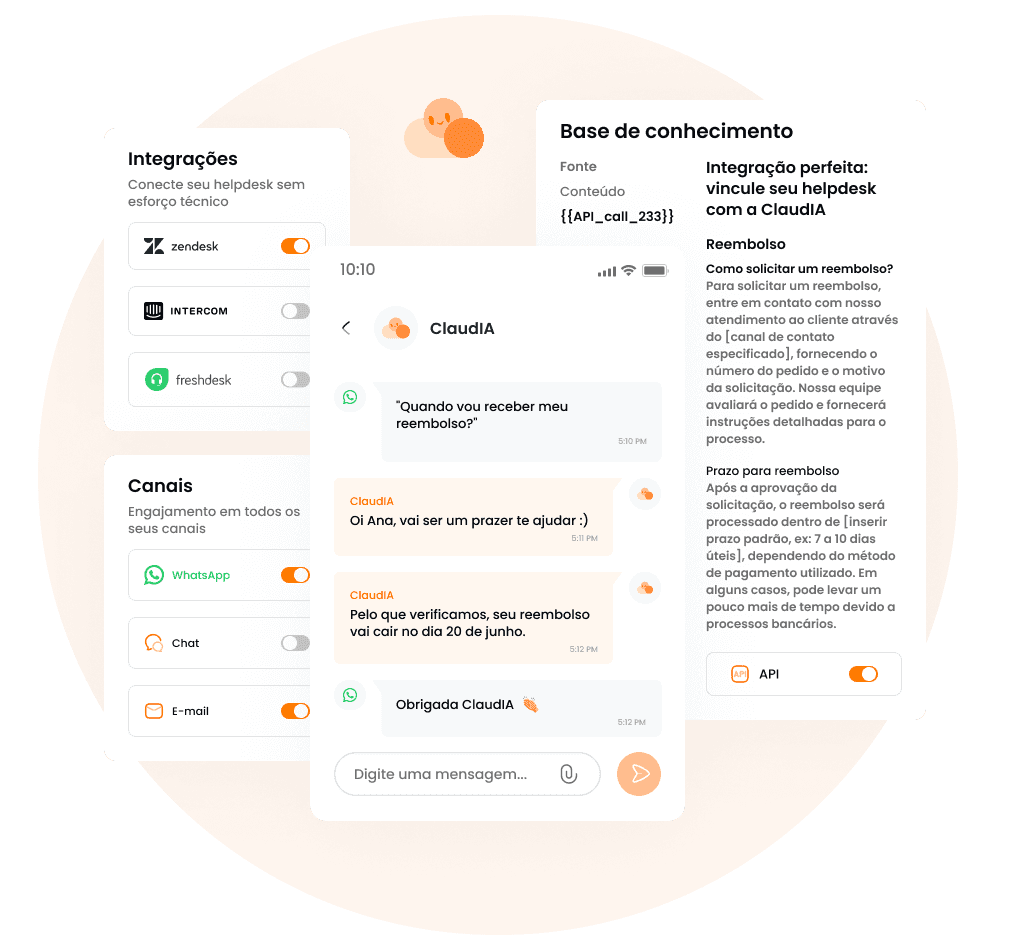

This impact appears even more quickly in critical channels, such as WhatsApp, which is preferred among users in Brazil and where the expectation for responses is immediate. With high contact volumes, the human team continues to absorb a good part of the customer service, operational costs increase, and the customer becomes frustrated with rigid responses.

When each approach makes more sense:

Decision tree chatbots work well when questions are simple and repetitive, catalogs are small and vary little, exceptions are few, integration needs are low, and limited coverage is acceptable.

AI agents make more sense when customer service volume is high, cases and exceptions (edge cases) vary widely, WhatsApp is a critical channel, and the bot needs to understand context, execute actions, and evolve with use.

In practice, the most common path in real operations is to start with a clear focus (top intents), measure containment, fallbacks to human agents, and quality, and then scale progressively, with governance.

What is a decision tree chatbot?

A decision-tree chatbot is an automated customer service system based on predefined flows. It guides the user through a sequence of questions and answers, usually in the form of buttons or menus, until it reaches a specific outcome. Each choice leads to a new path, like in a flowchart.

This type of chatbot does not understand context or “interpret” the customer's message. It only executes rules: if the user chooses A, it follows flow A; if they choose B, it goes to flow B. Everything the bot responds with must have been previously mapped, written, and maintained by someone on the team.
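The rule-following behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not a real product's implementation: the `FLOW` contents, the node names, and the `run` helper are all hypothetical. It shows that every reply must already exist in the hand-written flow, and that any input outside the mapped options falls into the "I didn't understand" path.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step in the flow: a message plus the options the user may pick."""
    message: str
    options: dict[str, str] = field(default_factory=dict)  # option label -> next node id


# Hypothetical flow, mapped and written by hand in advance.
FLOW = {
    "start": Node("How can we help?", {"A": "order_status", "B": "billing"}),
    "order_status": Node("You can track your order on the order tracking page."),
    "billing": Node("Do you need a copy of your invoice?", {"A": "invoice_copy", "B": "start"}),
    "invoice_copy": Node("Invoices are available under My Account > Billing."),
}


def next_node(current_id: str, choice: str) -> str:
    """Follow the rule for the chosen option; stay on the same node if it is unmapped."""
    return FLOW[current_id].options.get(choice, current_id)


def run(choices: list[str], start: str = "start") -> list[str]:
    """Replay a list of user choices and return the messages the bot would send."""
    node_id = start
    transcript = [FLOW[node_id].message]
    for choice in choices:
        new_id = next_node(node_id, choice)
        if new_id == node_id:
            # No rule matches: the bot cannot interpret free text.
            transcript.append("I didn't understand. Please choose one of the options.")
        else:
            node_id = new_id
            transcript.append(FLOW[node_id].message)
    return transcript
```

Calling `run(["B", "A"])` walks the billing path, while `run(["where is my order?"])` ends in the fallback message, because free text matches no option.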

What is an AI agent?

An AI agent, unlike the previous model, understands the intention behind the message, considers the context of the conversation and decides how to respond or act, even when the request arrives incomplete, out of order, or written differently than expected.

While traditional chatbots fail when the customer goes off script and triggers the “I don’t understand” response, the AI agent can interpret language variations, pick up on information mentioned earlier, and maintain consistency throughout the conversation. The customer does not need to adapt to the bot; the bot adapts to the customer.

In addition to responding, an AI agent can consult knowledge bases, follow company-defined policies, perform actions in internal systems, and, when necessary, hand off to human support with clear context, history, and intent. The result is a smoother, less repetitive service that truly reduces operational effort instead of merely redistributing it.

When the decision tree is still better

Despite the limitations, decision-tree-based chatbots work best when the problem is simple, predictable, and not very variable. Therefore, this model is usually sufficient when:

The volume of questions is low or moderate, with little variation in how customers ask them.

The processes are fixed and well defined, with few exceptions or conditional decisions.

The bot's goal is only triage or routing, not full resolution.

The team is small and needs something easy to control, even if limited.

There is a need for extreme control over the response, whether due to regulatory risk, compliance, or lack of mature AI governance.

In these scenarios, the effort to implement and govern an AI agent may not pay off in the short term. A simple, well-designed flow with few paths can solve the problem without adding technical or operational complexity.

When the AI agent is clearly superior

The AI agent becomes the best choice when operations stop being predictable and start dealing with volume, variation, and context at the same time. In other words: when the problem is no longer “answering,” but solving end to end.

💡 McKinsey studies indicate that up to 70% of customer service contacts are repetitive, which makes rigid models difficult to sustain as volume grows.

This model stands out when:

There is a lot of repetitive contact volume, but with different ways of asking the same thing. The customer does not follow a script, and the bot needs to understand intent, not keywords.

WhatsApp is the main channel of the operation, with high volume, urgency, and low tolerance for friction. Long menus and rigid flows quickly break the experience.

The response depends on context: order, subscribed plan, customer history, previous status, or actions already taken in the conversation.

Support requires real actions, such as checking orders, generating a payment slip or PIX payment, opening tickets, changing customer records, or following internal processes.

The goal is to truly reduce the human level 1 (L1) workload, freeing the team for more complex cases, and not just “hold” the customer for a few seconds before transferring.

In these scenarios, trying to scale with a decision tree usually produces the opposite effect: more frustration, more exceptions, and more human tickets. The AI agent, on the other hand, can absorb variation, learn from usage, and increase coverage over time, provided there is governance, monitoring, and a well-designed handoff.

Comparison: decision-tree chatbot vs. AI agent

To help you decide which model works best for your operation, we’ve listed below the main criteria that CX/Support leaders usually analyze before making a decision. Take a look!

| Criteria | Decision-tree chatbot | AI agent |
| --- | --- | --- |
| Real coverage | Handles only simple and predictable cases. Exceptions usually fall through to human support. | Resolves more cases end to end, even with variations in language and context. |
| Maintenance | Done manually, it requires constant flow updates. Each exception becomes a new node. | Evolves through use and monitoring. Adjustments focus on knowledge base, policies, and intents. Therefore, less structural rework. |
| Customer experience | High friction when the customer goes off script. Long menus and “I didn’t understand” are common. | More fluid conversation, adapted to the way the customer writes and asks. |
| Deployment time | Fast at the start, but grows in complexity over time. | Can take a few days to weeks, depending on the knowledge base and integrations, but it starts out more complete. |
| Integrations and actions | Limited or nonexistent. Usually informational. | Queries systems, performs actions, and follows real support workflows. |
| Scalability | When volume doubles, complexity and maintenance effort double too. | Scales better with volume and variation, maintaining the same core structure. |
| Governance and risk | Full control over the response, but little flexibility. | Requires governance (policies, auditing, fallback), but allows a balance between control and autonomy. |
| Cost and predictability | Usually a fixed cost, even with low actual resolution. | Models vary (license, usage, or resolution), with the potential to align cost with outcome. |

The most common mistake: trying to “force AI” on top of a broken process

An AI agent does not fix structural customer service problems. When the knowledge base is weak, no one measures results, and the handoff to the human team is confusing, AI ends up becoming the scapegoat. The narrative becomes “AI is wrong,” when, in practice, the process was already broken.

💡 Not by chance, Harvard Business Review points out that about 70% of AI projects fail when they try to scale without a clear scope, consistent data, and governance.

This mistake usually appears when the company skips some steps:

automates without knowing what the main contact reasons are;

does not define clear fallback criteria;

does not establish owners for the evolution of support.

The result is predictable: low resolution, customer frustration, and resistance from the internal team itself.

On the other hand, there are clear signs that the implementation has every chance of going well. Usually, these operations:

Have the top contact reasons well mapped, especially in level 1 (L1) support.

Have a minimum knowledge base, even if it is not perfect.

Define a project owner, responsible for metrics, adjustments, and decisions.

Maintain a continuous routine of monitoring and improvement, looking at errors, fallbacks to human agents, and feedback from the human team.

When these elements are present, AI stops being a risky promise and becomes a predictable component of the operation. It is not about “turning on AI,” but about building a system that learns, evolves, and delivers results over time.

How to implement it the right way (15–30 day plan)

The most common mistake is trying to automate everything at once. Successful operations start with a clear scope, focus on quick impact, and evolve based on data.

1. Map the main reasons for contact

List the top reasons for contact by channel to identify where the highest repetitive volume and human level 1 (L1) effort are. Use ticket history, conversations, and operational reports.
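As a rough illustration of this mapping step, the sketch below counts contact reasons per channel from a ticket export. The `tickets` data, the channel names, and the `top_reasons_by_channel` helper are assumptions made for the example; a real export would come from your ticketing tool and would likely need cleaning first.

```python
from collections import Counter

# Hypothetical ticket export: (channel, contact_reason) pairs.
tickets = [
    ("whatsapp", "order status"),
    ("whatsapp", "order status"),
    ("whatsapp", "payment slip"),
    ("email", "refund"),
    ("whatsapp", "refund"),
    ("email", "order status"),
]


def top_reasons_by_channel(rows, n=3):
    """Return {channel: [(reason, count), ...]} with the n most frequent reasons."""
    per_channel = {}
    for channel, reason in rows:
        # One Counter per channel, created the first time the channel appears.
        per_channel.setdefault(channel, Counter())[reason] += 1
    return {ch: counts.most_common(n) for ch, counts in per_channel.items()}


ranking = top_reasons_by_channel(tickets)
```

In this made-up sample, "order status" leads on WhatsApp, which would make it a natural candidate for the initial scope.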

2. Define the initial automation scope

Select only recurring cases, with a clear process and low risk. Not everything should go into automation at the start. A lean scope increases the chance of success and reduces frustration.

3. Prepare knowledge bases and AI limits

Create a minimal, up-to-date base aligned with company policies. Clearly define what the AI can resolve on its own and when it should hand off to a human.

4. Design the handoff and fallback criteria

The handoff is part of the experience. Establish when to escalate, which information should accompany the transfer, and how to avoid making the customer repeat everything from scratch.
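One way to make these criteria explicit is to write them down as a small policy. The sketch below is only an assumption about how that could look: the `Conversation` fields, the thresholds, and the in-scope intents are hypothetical values, not a prescribed standard. It covers the two points in this step: when to escalate, and which information travels with the transfer so the customer does not have to repeat it.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    customer_id: str
    intent: str                 # what the agent believes the customer wants
    confidence: float           # hypothetical 0..1 score reported by the agent
    failed_attempts: int = 0
    asked_for_human: bool = False
    history: list[str] = field(default_factory=list)
    collected: dict[str, str] = field(default_factory=dict)  # e.g. order number


# Assumed policy values; each operation would tune its own.
IN_SCOPE_INTENTS = {"order_status", "invoice_copy"}
MIN_CONFIDENCE = 0.7
MAX_FAILED_ATTEMPTS = 2


def should_escalate(conv: Conversation) -> bool:
    """Escalate on explicit request, out-of-scope intent, low confidence, or repeated failure."""
    return (
        conv.asked_for_human
        or conv.intent not in IN_SCOPE_INTENTS
        or conv.confidence < MIN_CONFIDENCE
        or conv.failed_attempts >= MAX_FAILED_ATTEMPTS
    )


def build_handoff(conv: Conversation) -> dict:
    """Package the context a human agent needs to continue without re-asking."""
    return {
        "customer_id": conv.customer_id,
        "intent": conv.intent,
        "collected": dict(conv.collected),
        "history": list(conv.history),
    }
```

Keeping the thresholds as named constants makes them easy to audit and adjust during the pilot.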

5. Run a pilot with frequent auditing

Put the agent into production for a specific scope and closely monitor the conversations. In the first few days, auditing should be constant for quick adjustments.

6. Measure real impact

Track metrics such as containment rate (cases resolved by AI), fallback rate, resolution time, complaints, team feedback, and CSAT/QA. These data points indicate whether the automation is truly working.
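Some of these metrics are simple ratios over conversation logs. The sketch below assumes a hypothetical `ConversationLog` record with three fields; real logs will have a different shape. It computes the share of cases resolved by AI (called containment rate in the FAQ), the fallback rate to humans, and the average resolution time.

```python
from dataclasses import dataclass


@dataclass
class ConversationLog:
    resolved_by_ai: bool        # closed without human involvement
    handed_to_human: bool       # transferred to the support team
    resolution_minutes: float


def summarize(logs: list[ConversationLog]) -> dict[str, float]:
    """Compute containment rate, fallback rate, and average resolution time."""
    total = len(logs)
    if total == 0:
        # Avoid dividing by zero when there is nothing to measure yet.
        return {"containment_rate": 0.0, "fallback_rate": 0.0, "avg_resolution_minutes": 0.0}
    contained = sum(1 for log in logs if log.resolved_by_ai)
    fallbacks = sum(1 for log in logs if log.handed_to_human)
    return {
        "containment_rate": contained / total,
        "fallback_rate": fallbacks / total,
        "avg_resolution_minutes": sum(log.resolution_minutes for log in logs) / total,
    }


# Made-up pilot data: three cases resolved by AI, one handed to a human.
pilot = [
    ConversationLog(True, False, 2.0),
    ConversationLog(True, False, 4.0),
    ConversationLog(True, False, 6.0),
    ConversationLog(False, True, 20.0),
]
report = summarize(pilot)
```

Comparing the same report week over week, for the same scope, is what shows whether adjustments are working.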

7. Scale with governance

With the pilot validated, expand to new intents and integrate real actions (lookups, bills, service tickets). The logic becomes continuous: measure, adjust, and scale while maintaining control and predictability.

Final recommendation: what to choose in your case

As you can see, the right technology depends on the level of complexity of the operation, the volume of contacts, and the role customer service plays in business growth. Therefore, here are some recommendations:

Scenario A | Simple and predictable operation

If your customer service handles few contact reasons, low variation in the way people ask questions, and fixed processes, a decision-tree chatbot may be enough. It works well for informational cases, basic triage, and requests with very clear paths (as long as the scope is limited and well maintained).

Scenario B | Growing operation, WhatsApp-first and high volume

If WhatsApp is the main channel, volume is growing and you do not intend to double the team, and support requests require context and real actions, the AI agent becomes the more efficient choice. In this scenario, it not only responds but resolves, learns through use, and helps reduce the human level 1 (L1) workload consistently.

If you are still unsure, run a diagnosis of your main contact reasons and the real automation potential of your operation.

Frequently asked questions

What is the main difference between a tree-based chatbot and an AI agent?

Tree-based chatbots follow fixed flows and predefined rules. AI agents understand the customer’s intent, consider context, and can adapt the response or perform actions without relying on rigid flows.

Does a decision-tree chatbot still work?

Yes, it works in simple and predictable scenarios, such as basic questions, triage, or fixed information.

Can an AI agent make mistakes in customer service?

It can, like any system or human. The difference is that AI agents work better with governance: clear limits, handoff to humans, and constant monitoring. The most common mistake is deploying AI without a knowledge base or without oversight.

Is it worth replacing a traditional chatbot with an AI agent?

It depends on the scenario. If customer service is simple and volume is low, the traditional chatbot may be enough. If there is high volume, WhatsApp is the main channel, and there is a need to resolve cases end to end, the AI agent tends to deliver better results.

Does an AI agent replace the support team?

No. It reduces the volume of repetitive interactions (L1) and frees the human team to handle more complex cases. In practice, AI acts as a scaling layer, not as a total replacement.

Does implementing an AI agent take a long time?

Not necessarily. Many operations start with a pilot in just a few weeks, focusing on the main reasons for contact. The key is to start small, measure results, and expand gradually.

Does a chatbot work well on WhatsApp?

Tree-based chatbots usually create friction on WhatsApp because customers expect to talk, not navigate menus. AI agents adapt better to this channel because they understand natural language and the context of the conversation.

How do you know if the chatbot is working well?

Track containment rate (cases resolved by AI), fallback rate to a human, resolution time, complaints, and CSAT. If these indicators do not improve, automation is not generating real impact.
