Decision tree chatbots tend to work better for simple and predictable questions, with little variation and no need for context. AI agents make more sense in operations with high volume, multiple exceptions, and the need to resolve cases from end to end.

If you are evaluating chatbots to improve your company's customer service, choosing the right model for your scenario is essential. The wrong choice can have the opposite effect: instead of gaining efficiency, you create more friction, overload the team, and compromise the customer experience.

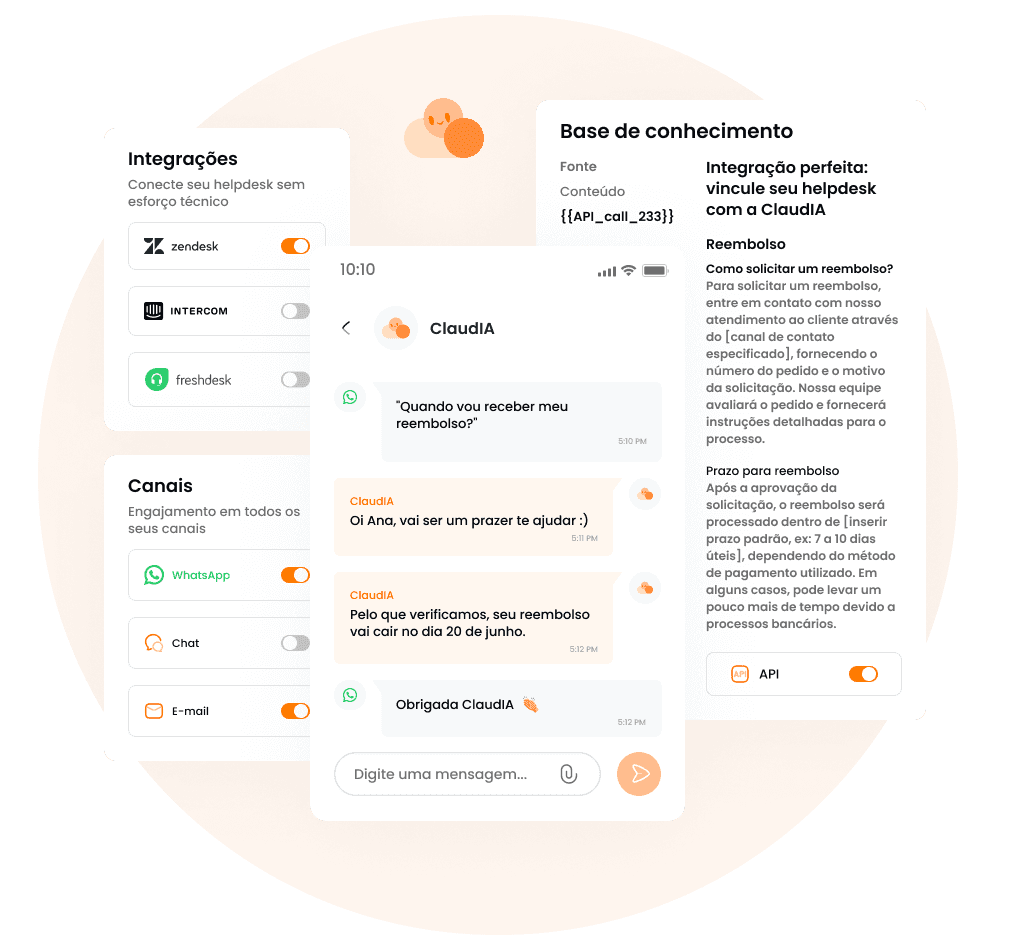

This impact shows up even faster in critical channels such as WhatsApp, the preferred channel among users in Brazil, where customers expect an immediate response. When the model does not fit, high contact volumes mean the human team keeps absorbing a good part of the service load, operational costs rise, and customers become frustrated with rigid responses.

When each approach makes more sense:

Decision tree chatbots work well when questions are simple and repetitive, catalogs are small and vary little, exceptions are few, integration needs are low, and limited coverage is acceptable.

AI agents make more sense when service volume is high, cases and exceptions (edge cases) vary widely, WhatsApp is a critical channel, and the bot needs to understand context, execute actions, and evolve with use.

In practice, the most common path in real operations is to start with a clear focus (top intents), measure retention, escapes, and quality, and scale progressively, with governance.

What is a decision tree chatbot?

A decision tree chatbot is an automated service system based on pre-defined flows. It guides the user through a sequence of questions and answers, usually in the form of buttons or menus, until reaching a specific outcome. Each choice leads to a new path, like in a flowchart.

This type of chatbot neither understands context nor “interprets” the customer's message. It merely executes rules: if the user chooses A, it follows the A flow; if they choose B, it goes to the B flow. Everything the bot says must have been mapped, written, and maintained in advance by someone on the team.
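To make the "flowchart" idea concrete, here is a minimal sketch of a rule-based flow in Python. The node names, prompts, and the `step` helper are hypothetical and exist only for illustration; real chatbot platforms configure this visually or in their own formats.

```python
# Hypothetical flow: node names, prompts, and options are illustrative only.
FLOW = {
    "start": {
        "prompt": "How can we help? (1) Order status (2) Billing",
        "options": {"1": "order_status", "2": "billing"},
    },
    "order_status": {
        "prompt": "Track your order on the 'My orders' page.",
        "options": {},
    },
    "billing": {
        "prompt": "Invoices are sent by email on the 1st of each month.",
        "options": {},
    },
}

FALLBACK = "I didn't understand. Please choose one of the options."


def step(node: str, user_input: str) -> tuple[str, str]:
    """Return (next_node, reply) for a single user turn."""
    options = FLOW[node]["options"]
    choice = user_input.strip()
    if choice not in options:
        # No interpretation happens: anything off-script hits the fallback
        # and the user stays on the same node.
        return node, FALLBACK
    next_node = options[choice]
    return next_node, FLOW[next_node]["prompt"]
```

Calling `step("start", "1")` moves to the order status node, while a free-text message such as "where is my package?" returns the fallback. Every new exception means adding another node and another option by hand.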

What is an AI agent in customer service?

An AI agent, unlike the previous model, understands the intention behind the message, considers the context of the conversation, and decides how to respond or act, even when the request is incomplete, out of order, or written differently than expected.

Traditional chatbots fail when the customer strays from the script, falling back to the “I didn’t understand” response. An AI agent can interpret variations in language, recall previously mentioned information, and maintain coherence throughout the conversation. The customer does not need to adapt to the bot; the bot adapts to the customer.

In addition to responding, an AI agent can consult knowledge bases, follow policies defined by the company, execute actions in internal systems, and, when necessary, make a handoff to human service with clear context, history, and intention. The result is a more fluid, less repetitive service that actually reduces operational effort instead of merely redistributing it.
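One way to picture a handoff "with clear context, history, and intention" is as a small structured record passed to the human agent. The sketch below is an assumption about what such a record could contain; the `Handoff` class and all of its field names are hypothetical and not taken from any specific product.

```python
from dataclasses import dataclass, field, asdict


@dataclass
class Handoff:
    """Context passed to a human agent (all field names are hypothetical)."""
    customer_id: str
    intent: str                      # what the customer is trying to do
    reason: str                      # why the agent is handing off
    history: list[str] = field(default_factory=list)         # prior messages
    collected: dict[str, str] = field(default_factory=dict)  # facts already gathered

    def summary(self) -> str:
        """One-line summary so the human does not have to re-ask basics."""
        facts = ", ".join(f"{k}={v}" for k, v in self.collected.items()) or "none"
        return f"[{self.intent}] handed off because {self.reason}; known facts: {facts}"

    def to_payload(self) -> dict:
        """Plain dict, e.g. for sending to a ticketing system."""
        return asdict(self)
```

With a record like this, the human agent starts from the intent, the reason for escalation, and the facts already collected instead of asking the customer to repeat everything.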

When the decision tree is still better

Despite their limitations, decision tree chatbots work better when the problem is simple, predictable, and low in variability. This model is often sufficient when:

The volume of inquiries is low or moderate, with little variation in how customers ask questions.

The processes are fixed and well-defined, with few exceptions or conditional decisions.

The bot's objective is only triage or routing, not complete resolution.

The team is small and needs something easy to control, even if limited.

There is a need for extreme control over the response, whether due to regulatory risk, compliance, or lack of mature AI governance.

In these scenarios, the effort to implement and govern an AI agent may not pay off in the short term. A simple, well-designed flow with few paths can solve the problem without adding technical or operational complexity.

When the AI agent is clearly superior

The AI agent becomes the best choice when the operation ceases to be predictable and begins to handle volume, variation, and context simultaneously. In other words: when the problem is no longer “responding,” but solving end-to-end.

💡 McKinsey studies indicate that up to 70% of customer service contacts are repetitive, making rigid models difficult to sustain as volume increases.

This model stands out when:

There is a lot of repetitive contact volume, but with various ways to ask the same thing. The customer does not follow a script, and the bot needs to understand intent, not keywords.

WhatsApp is the operation's main channel, with high volume, urgency, and low tolerance for friction. Long menus and rigid flows quickly break the experience.

The response depends on context: order, contracted plan, customer history, prior status, or actions already taken in the conversation.

The service requires real actions, such as checking orders, generating a boleto (bank payment slip) or PIX charge, opening tickets, updating customer records, or following internal processes.

The goal is to genuinely reduce the human tier-1 (N1) workload, freeing the team for more complex cases, and not just to “hold” the customer for a few seconds before transferring.

In these scenarios, trying to scale with a decision tree usually has the opposite effect: more frustration, more exceptions, and more tickets for humans. The AI agent, on the other hand, can absorb variation, learn from use, and increase coverage over time, as long as there is governance, monitoring, and a well-designed handoff.

Practical comparison: 8 criteria for decision-making

To help you decide which model works best for your operation, here are the main criteria that CX and Support leaders usually analyze before making a decision.

| Criterion | Decision Tree Chatbot | AI Agent |
| --- | --- | --- |
| Real coverage | Only resolves simple and predictable cases. Exceptions often fall on human service. | Resolves more end-to-end cases, even with variations in language and context. |
| Maintenance | Done manually, requires constant updating of flows. Each exception becomes a new node. | Evolves with use and monitoring. Adjustments focus on base, policies, and intents. Thus, less structural rework. |
| Customer experience | High friction when the customer deviates from the script. Long menus and “I didn’t understand” are common. | Smoother conversation, adapted to how the customer writes and asks. |
| Implementation time | Quick at first, but grows in complexity over time. | Can take days to weeks, depending on the base and integrations, but starts off more complete. |
| Integrations and actions | Limited or nonexistent. Generally informational. | Consults systems, executes actions, and follows real service processes. |
| Scalability | When volume doubles, the complexity and maintenance effort double as well. | Scales better with volume and variation, maintaining the same base structure. |
| Governance and risk | Total control over the response, but little flexibility. | Requires governance (policies, auditing, fallback), but allows a balance between control and autonomy. |
| Cost and predictability | Generally a fixed cost, even with low real resolution. | Models vary (license, usage, or resolution), with the potential to align cost with results. |

The most common mistake: trying to “force AI” on top of a broken process

An AI agent does not fix structural problems in customer service. When the knowledge base is weak, no one measures results, and the handoff to the human team is confusing, AI ends up becoming the scapegoat. The narrative turns into "AI makes mistakes," when in practice the process was already broken.

💡 Not coincidentally, Harvard Business Review points out that about 70% of AI projects fail when they try to scale without a clear scope, consistent data, and governance.

This mistake usually appears when the company skips some steps:

automates without knowing what the main reasons for contact are;

does not define clear fallback criteria;

does not assign owners for the ongoing evolution of the service.

The result is predictable: low resolution, customer frustration, and resistance from the internal team itself.
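"Clear fallback criteria" can be as simple as a handful of explicit rules. The following is a minimal sketch under stated assumptions: the thresholds, the intent names, and the `Turn` and `should_fallback` names are all hypothetical and would need to be tuned to each operation.

```python
from dataclasses import dataclass

# All thresholds and intent names below are illustrative assumptions.
MIN_CONFIDENCE = 0.6
MAX_FAILED_TURNS = 2
HUMAN_ONLY_INTENTS = {"legal_complaint", "cancellation_dispute"}


@dataclass
class Turn:
    intent: str
    confidence: float        # score from whatever intent model is in use
    failed_turns: int        # consecutive turns the bot could not resolve
    asked_for_human: bool = False


def should_fallback(turn: Turn) -> tuple[bool, str]:
    """Return (fallback?, reason). Rules are checked in priority order."""
    if turn.asked_for_human:
        return True, "customer asked for a human"
    if turn.intent in HUMAN_ONLY_INTENTS:
        return True, "intent reserved for human agents"
    if turn.confidence < MIN_CONFIDENCE:
        return True, "low confidence"
    if turn.failed_turns >= MAX_FAILED_TURNS:
        return True, "too many failed turns"
    return False, ""
```

Returning the reason alongside the decision makes it possible to monitor why conversations leave the bot, which feeds directly into the improvement routine.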

On the other hand, there are clear signs that an implementation is set up to succeed. Typically, these operations:

Have the top reasons for contact well mapped, especially at N1.

Have a minimum knowledge base, even if it is not perfect.

Define a project owner, responsible for metrics, adjustments, and decisions.

Maintain a continuous monitoring and improvement routine, looking at errors, escapes, and feedback from the human team.

When these elements are present, AI ceases to be a risky promise and becomes a predictable component of the operation. It is not about "turning on AI," but about building a system that learns, evolves, and delivers results over time.
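Mapping the top reasons for contact can start from something as simple as counting labeled tickets. The sketch below assumes each past contact already carries a reason label; the `top_intents` function and the 80% coverage target are hypothetical choices for illustration.

```python
from collections import Counter


def top_intents(labels: list[str], coverage: float = 0.8) -> list[tuple[str, float]]:
    """Return the most frequent contact reasons that together reach `coverage`.

    Each item is (reason, share of total contacts).
    """
    counts = Counter(labels)
    total = sum(counts.values())
    selected: list[tuple[str, float]] = []
    covered = 0
    for intent, n in counts.most_common():
        # Stop once the reasons selected so far cover the target share.
        if covered / total >= coverage:
            break
        selected.append((intent, round(n / total, 3)))
        covered += n
    return selected
```

The output is a short list of reasons to automate first, which matches the advice to start with a clear focus and expand from there.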

How to implement it the right way (15–30 day plan)

Final recommendation: what to choose in your case

Frequently Asked Questions

What is the main difference between a tree chatbot and an AI agent?

Tree chatbots follow fixed flows and predefined rules. AI agents understand the customer's intent, consider context, and can adapt the response or take actions without relying on rigid flows.

Does a decision tree chatbot still work?

Yes, it works in simple and predictable scenarios, such as basic inquiries, triage, or fixed information.

Can an AI agent make mistakes in service?

Yes, like any system or human. The difference is that AI agents perform better with governance: clear limits, handoffs to humans, and constant monitoring. The most common mistake is deploying AI without a knowledge base or oversight.

Is it worth replacing a traditional chatbot with an AI agent?

It depends on the scenario. If the service is simple and the volume is low, a traditional chatbot may be sufficient. If there is high volume, WhatsApp as the main channel, and a need for end-to-end case resolution, the AI agent tends to deliver better results.

Does an AI agent replace the support team?

No. It reduces the volume of repetitive inquiries (N1) and frees the human team for more complex cases. In practice, AI acts as a scaling layer, not a total replacement.

Does implementing an AI agent take a long time?

Not necessarily. Many operations start with a pilot in a few weeks, focusing on the main reasons for contact. The secret is to start small, measure results, and expand gradually.

Do chatbots work well on WhatsApp?

Tree chatbots tend to create friction on WhatsApp because customers expect to converse, not navigate menus. AI agents adapt better to this channel as they understand natural language and the context of the conversation.

How do you know if the chatbot is performing well?

Track the retention rate (cases resolved by AI), the rate of fallback to humans, resolution time, complaints, and CSAT. If these indicators do not improve, the automation is not making a real impact.
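These indicators can be computed from a simple log of conversations. The sketch below is illustrative only: the `Conversation` fields are hypothetical, and CSAT is computed here as the share of answered surveys scoring 4 or 5 on a 1 to 5 scale, which is a common convention but an assumption about how your operation measures it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    resolved_by_ai: bool
    handed_to_human: bool
    resolution_minutes: float
    csat: Optional[int] = None  # 1-5 survey score, None if unanswered


def service_metrics(conversations: list[Conversation]) -> dict[str, Optional[float]]:
    """Compute retention, fallback, average resolution time, and CSAT."""
    total = len(conversations)
    if total == 0:
        raise ValueError("no conversations to measure")
    scores = [c.csat for c in conversations if c.csat is not None]
    return {
        "retention_rate": sum(c.resolved_by_ai for c in conversations) / total,
        "fallback_rate": sum(c.handed_to_human for c in conversations) / total,
        "avg_resolution_minutes": sum(c.resolution_minutes for c in conversations) / total,
        # Assumed convention: share of answered surveys with a score of 4 or 5.
        "csat": sum(s >= 4 for s in scores) / len(scores) if scores else None,
    }
```

Comparing these numbers before and after a pilot gives a concrete answer to whether the automation is having an effect.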